NVIDIA has announced that its A100 PCIe GPU accelerator now comes with a liquid-cooling option for improved energy efficiency. Liquid cooling, which originated in the mainframe era, is maturing in the AI era. In a modern form known as direct-chip cooling, it’s now widely used inside the world’s fastest supercomputers.

Liquid cooling is the next stage in accelerated computing for NVIDIA GPUs, which already deliver up to 20 times better energy efficiency than CPUs on AI inference and high-performance computing (HPC) tasks.

Switching all CPU-only AI and HPC servers worldwide to GPU-accelerated systems could save 11 trillion watt-hours of energy per year. That’s equivalent to the energy used by more than 1.5 million homes in a year.
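As a rough sanity check on these two figures, the Python sketch below divides the quoted savings by the quoted number of homes. The function and variable names are illustrative, and the per-home value is derived here from the article's numbers rather than stated by NVIDIA. Because the article says "over" 1.5 million homes, the result is best read as an upper bound.

```python
# Figures quoted in the article.
SAVINGS_WH_PER_YEAR = 11e12   # 11 trillion watt-hours per year
HOMES = 1.5e6                 # "over 1.5 million" homes, used as a lower bound


def implied_kwh_per_home(savings_wh: float, homes: float) -> float:
    """Annual energy per home implied by the two quoted figures, in kWh."""
    return savings_wh / homes / 1_000


if __name__ == "__main__":
    terawatt_hours = SAVINGS_WH_PER_YEAR / 1e12
    per_home = implied_kwh_per_home(SAVINGS_WH_PER_YEAR, HOMES)
    print(f"{terawatt_hours:.0f} TWh per year, about {per_home:,.0f} kWh per home")
```

The implied figure of roughly 7,300 kWh per home per year is only as precise as the rounded inputs.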

With the introduction of its first data center PCIe GPU with direct-chip cooling, NVIDIA adds to its sustainability efforts. As part of a holistic strategy for sustainable cooling and heat capture, Equinix has qualified the A100 80GB PCIe Liquid-Cooled GPU for use in its data centers. The GPUs are sampling now and will be generally available this summer.

Data center operators are working to phase out chillers, which evaporate millions of gallons of water each year to cool the air inside data centers. Liquid cooling promises closed systems that recycle small volumes of fluid and focus on key hot spots.

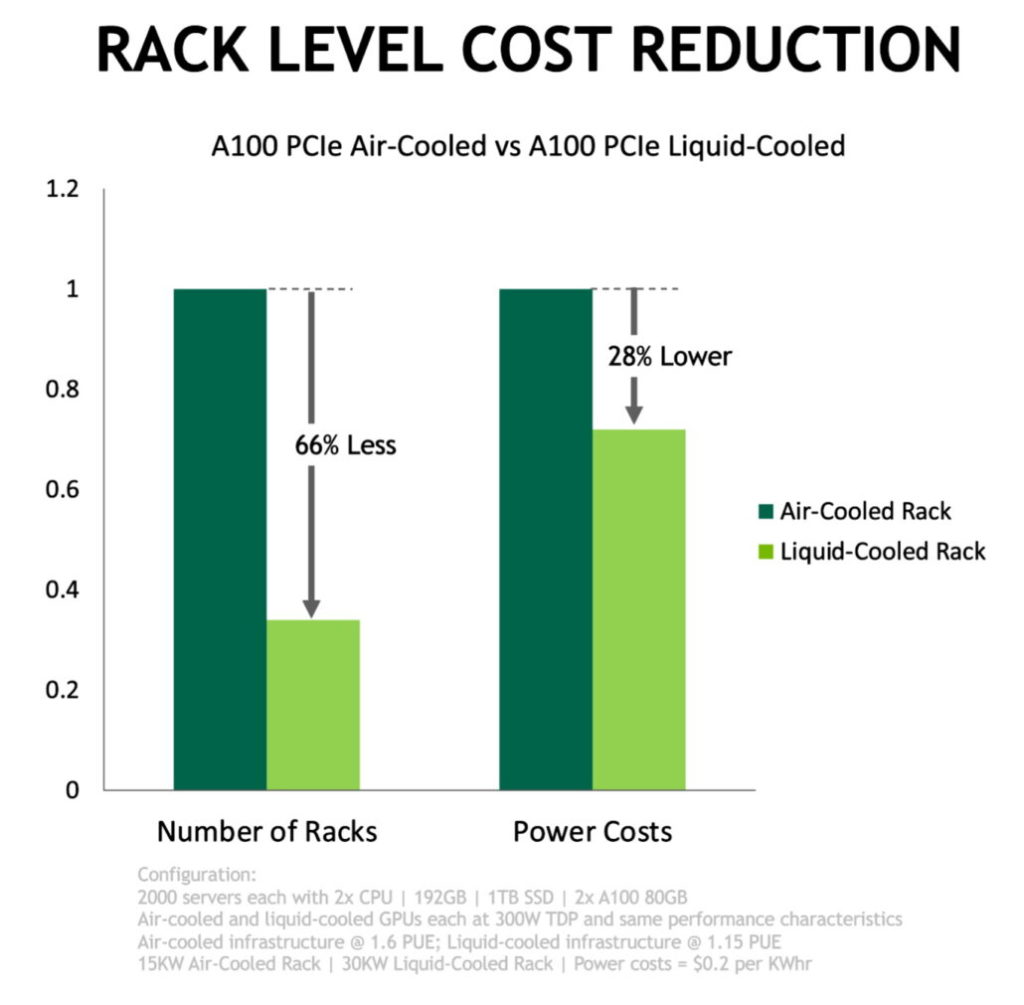

In separate tests, Equinix and NVIDIA each found that a data center with liquid cooling could run the same workloads as an air-cooled facility while using around 30% less energy. NVIDIA estimates that the liquid-cooled data center could achieve a power usage effectiveness (PUE) of 1.15, compared to 1.6 for its air-cooled counterpart.
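PUE is the ratio of a facility's total energy use to the energy used by its IT equipment, so the two PUE estimates can be compared directly with the "around 30%" figure. The Python sketch below does that under one simplifying assumption: both facilities carry the same IT load. The function names and the 1,000 kWh load are hypothetical and chosen only for illustration.

```python
def facility_energy(it_energy_kwh: float, pue: float) -> float:
    """Total facility energy, using PUE = total facility energy / IT energy."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_energy_kwh * pue


def relative_savings(pue_baseline: float, pue_improved: float) -> float:
    """Fraction of total facility energy saved at a fixed IT load."""
    return 1.0 - pue_improved / pue_baseline


if __name__ == "__main__":
    it_load_kwh = 1_000.0  # hypothetical IT load, identical in both facilities
    air = facility_energy(it_load_kwh, 1.6)
    liquid = facility_energy(it_load_kwh, 1.15)
    print(f"air-cooled: {air:.0f} kWh, liquid-cooled: {liquid:.0f} kWh")
    print(f"savings: {relative_savings(1.6, 1.15):.1%}")
```

At a fixed IT load, the ratio 1.15 / 1.6 works out to about 28% less total energy, which is in line with the roughly 30% reported from the tests.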

In addition, liquid-cooled data centers can fit twice as much computing into the same amount of space. That’s because the liquid-cooled A100 GPUs take up only one PCIe slot, whereas air-cooled A100 GPUs take up two.

At least a dozen system manufacturers plan to incorporate the liquid-cooled GPUs into their offerings later this year.

They include ASUS, ASRock Rack, Foxconn Industrial Internet, GIGABYTE, H3C, Inspur, Inventec, Nettrix, QCT, Supermicro, Wiwynn, and xFusion.

Energy-efficiency standards are being debated in Asia, Europe, and the United States. Banks and other large data center operators are also considering liquid cooling. The technology isn’t just for data centers, either. Cars and other systems need it to cool high-performance hardware embedded in confined spaces.

Today’s liquid-cooled GPUs deliver the same performance for less energy, making them well suited to rapid adoption. NVIDIA anticipates that future cards will offer the option of higher performance for the same amount of energy, as users have requested.
