Intel’s Habana Labs team announced two key new products today at the hybrid Intel Vision event: Gaudi2, the second iteration of the Gaudi deep learning training processor, and Greco, the successor to the Goya deep learning inference processor. According to Intel, the processors are significantly faster than their predecessors and the competition. Gaudi2 processors are already available to Habana customers, and Greco will begin sampling to a select group of clients in the second half of the year.

Habana Labs was created in 2016 with the goal of developing world-class AI processors but was acquired by Intel for $2 billion barely three years later. The first-generation Goya inference processors appeared in 2018, when Habana came out of stealth, while the first-generation Gaudi training processors debuted in 2019, just prior to Intel’s acquisition.

While Gaudi and Goya have been offered in a number of form factors over the last few years, these are the first new chips launched by Habana Labs following its acquisition by Intel.

Gaudi2 and Greco by Intel’s Habana Labs have both switched from a 16nm to a 7nm process

The 10 Tensor processing cores featured in the first-generation Gaudi training processor have been raised to 24, while the in-package memory capacity has been tripled from 32GB (HBM2) to 96GB (HBM2E), and the on-board SRAM has been doubled from 24MB to 48MB. With that much HBM2E, Gaudi2 is “the first and only accelerator that combines such an amount of memory,” according to Eitan Medina, COO of Habana Labs. The processor has a TDP of 600W (vs. 350W for Gaudi), although Medina says that it still uses passive cooling and does not require liquid cooling.
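To put the generational changes side by side, the quoted figures can be turned into simple ratios. The following is a minimal sketch in Python; the dictionary layout and the function name are illustrative assumptions, and only the numbers come from the article.

```python
# Spec figures as quoted in the article; the dictionary layout is illustrative.
GAUDI = {"tensor_cores": 10, "hbm_gb": 32, "sram_mb": 24, "tdp_w": 350}
GAUDI2 = {"tensor_cores": 24, "hbm_gb": 96, "sram_mb": 48, "tdp_w": 600}


def generation_ratios(old, new):
    """Return new/old for every spec the two generations share."""
    return {key: new[key] / old[key] for key in old if key in new}


ratios = generation_ratios(GAUDI, GAUDI2)
for name, ratio in ratios.items():
    print(f"{name}: {ratio:.2f}x")
```

By these numbers, memory capacity grows faster than the power budget: HBM capacity triples while TDP rises by roughly 1.7 times.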

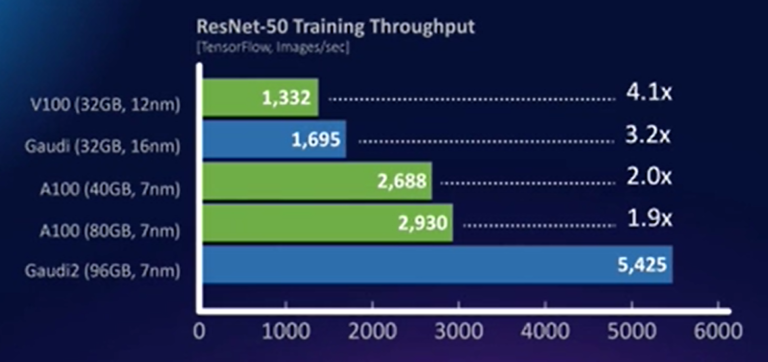

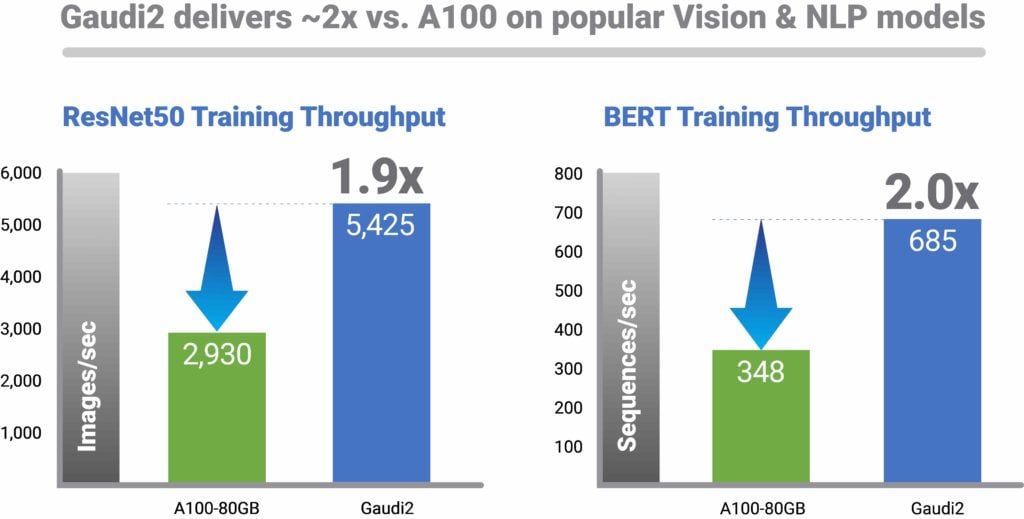

On a handful of popular workloads, Intel showed comparisons between Gaudi2, its predecessor, and the competition. On ResNet-50, Gaudi2 delivered 3.2 times the throughput of Gaudi, 1.9 times that of an 80GB Nvidia A100, and 4.1 times that of an Nvidia V100. On some other benchmarks, the gap between Gaudi2 and the 80GB A100 was considerably wider: Gaudi2 outperformed the 80GB A100 by a factor of 2.8 in BERT Phase-2 training throughput. “Comparing V100 and A100 is critical because both are heavily used in the cloud and on-premise,” Medina explained.
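Because all three ResNet-50 ratios are stated relative to Gaudi2, they also imply how the other chips compare with each other. The sketch below works that out in Python. It assumes the three figures were measured under comparable conditions, which the article does not confirm, and the function names are hypothetical.

```python
# Gaudi2 speedups on ResNet-50 as quoted by Intel (vendor-reported figures).
GAUDI2_SPEEDUP_OVER = {"Gaudi": 3.2, "A100-80GB": 1.9, "V100": 4.1}


def relative_throughput(speedups):
    """Throughput of each chip as a fraction of Gaudi2's (Gaudi2 = 1.0)."""
    return {chip: 1.0 / factor for chip, factor in speedups.items()}


def implied_speedup(speedups, faster, slower):
    """Speedup of `faster` over `slower`, assuming both ratios share a baseline."""
    return speedups[slower] / speedups[faster]


relative = relative_throughput(GAUDI2_SPEEDUP_OVER)
a100_over_v100 = implied_speedup(GAUDI2_SPEEDUP_OVER, "A100-80GB", "V100")
a100_over_gaudi = implied_speedup(GAUDI2_SPEEDUP_OVER, "A100-80GB", "Gaudi")

print(f"A100 vs V100:  {a100_over_v100:.2f}x")
print(f"A100 vs Gaudi: {a100_over_gaudi:.2f}x")
```

Read this way, Intel's own figures put the 80GB A100 ahead of the first-generation Gaudi on this benchmark, which is the gap Gaudi2 is meant to close.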

Gaudi2 is now available to Habana customers in a mezzanine card form factor as well as in the HLS-Gaudi2 server, which is designed to help clients evaluate Gaudi2. The server includes eight Gaudi2 cards and a dual-socket Intel Xeon subsystem. Habana is collaborating with Supermicro to bring a Gaudi2-equipped training server (the Supermicro X12 Gaudi2 Training Server) to market in Q3 2022, and is working with DDN to build a variant of the X12 supplemented with DDN’s AI-focused storage. In addition, a thousand Gaudi2 processors have already been deployed to Habana’s datacenters in Israel, where they are being used for software optimization and the development of the Gaudi3 processor.

Greco moves from Goya’s dual-slot form factor to a single-slot one, lowering TDP from 200W to 75W

“Because of the compact form factor, users will be able to double the number of accelerators in the same host system,” Medina added. Beyond that, Intel shared far less about Greco, which is due out in the second half of the year, than it did about Gaudi2.

Gaudi2 and Greco are the newest entrants in an increasingly crowded AI accelerator arms race, which includes not only Nvidia’s GPUs but also specialised accelerators from companies like Cerebras, Graphcore, and SambaNova. Of course, Intel’s comparisons of its Habana products to Nvidia products come with a big caveat: they don’t include Nvidia’s upcoming H100 GPUs, which promise huge speedups over the A100 and are expected to launch in Q3 2022. Habana also faces internal competition from Intel’s upcoming Ponte Vecchio GPUs, which are likewise marketed as high-performance AI accelerators.

The new Habana processors are a “prime example of Intel executing on its AI strategy to give customers a wide array of solution choices—from cloud to edge—addressing the growing number and complex nature of AI workloads,” according to Sandra Rivera, executive vice president and general manager of Intel’s Datacenter and AI Group.

Gaudi2 deep learning processors deliver:

Deep learning training efficiency:

The Habana Gaudi2 processor significantly increases training performance, building on the same high-efficiency first-generation Gaudi architecture that delivers up to 40% better price performance in the AWS cloud with Amazon EC2 DL1 instances and on-premises with the Supermicro Gaudi Training Server. With a process leap from 16 nm to 7 nm, Gaudi2 promises a significant jump in compute, memory, and networking capabilities. Gaudi2 also includes a built-in media processing engine for handling compressed media and offloading the host system. Gaudi2 triples in-package memory capacity from 32GB to 96GB of HBM2E at 2.45TB/sec bandwidth, and integrates 24 on-chip 100GbE RoCE RDMA NICs for scaling up and scaling out over standard Ethernet.
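For a sense of scale, the networking and memory figures above can be converted into the same units. The sketch below is a back-of-the-envelope calculation in Python, assuming raw line rate with no protocol overhead, decimal units (1 TB = 1000 GB), and bandwidth counted in one direction only. None of these assumptions are stated by Intel, and the names are illustrative.

```python
# Figures quoted in the article.
NIC_COUNT = 24
NIC_GBIT_PER_S = 100      # each on-chip port is 100GbE
HBM_TBYTE_PER_S = 2.45    # HBM2E bandwidth


def aggregate_nic_gbyte_per_s(count, gbit_per_s):
    """Raw aggregate Ethernet bandwidth in GB/s (8 bits per byte, no overhead)."""
    return count * gbit_per_s / 8


nic_total = aggregate_nic_gbyte_per_s(NIC_COUNT, NIC_GBIT_PER_S)
hbm_to_nic = HBM_TBYTE_PER_S * 1000 / nic_total

print(f"Aggregate NIC bandwidth: {nic_total:.0f} GB/s")
print(f"HBM bandwidth is about {hbm_to_nic:.1f}x the aggregate NIC bandwidth")
```

Under these assumptions the 24 ports add up to 2.4 Tb/s, or about 300 GB/s, roughly an eighth of the quoted local memory bandwidth.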

Customer benefits:

Gaudi2 gives customers a higher-performance deep learning training option than existing GPU-based acceleration, allowing them to train more while spending less and lowering total cost of ownership in the cloud and data centre. Gaudi2’s faster time-to-train, which can translate into faster time-to-insights and faster time-to-market, can help businesses across a variety of model types and end-market applications. Gaudi2 is intended to greatly improve vision modelling in applications such as autonomous vehicles, medical imaging, and manufacturing defect detection, as well as natural language processing applications.

Networking capacity, flexibility and efficiency:

By increasing training bandwidth on second-generation Gaudi, Habana has made scaling out training capacity cost-effective and simple for customers. With industry-standard RoCE integrated on chip, customers can easily scale and configure Gaudi2 systems to fit their deep learning cluster requirements. Because the system is built on widely used industry-standard Ethernet connectivity, Gaudi2 lets users choose from a wide range of Ethernet switching and related networking equipment, resulting in cost savings. Avoiding proprietary interconnect technology in the data centre (as offered by competitors) matters to IT decision-makers who want to avoid single-vendor “lock-in.” The network interface controller (NIC) ports are integrated into the chip, which reduces component costs.

Simplified model build and migration:

The Habana® SynapseAI® software suite is designed for developing deep learning models and migrating existing GPU-based models to Gaudi platform hardware. SynapseAI software allows you to train models on Gaudi2 and then run inference on any target, such as Intel® Xeon® CPUs, Habana Greco, or Gaudi2 itself. Developers can find documentation, tools, how-to content, and a community support forum on the Habana Developer Site, and reference models and a model roadmap on the Habana GitHub. Model migration is as simple as adding two lines of code; for advanced customers who want to develop their own kernels, Habana provides the full tool suite.

Availability of Gaudi2 training solutions:

Habana customers can now purchase Gaudi2 processors. This year, Habana will collaborate with Supermicro to bring the Supermicro Gaudi2 Training Server to market. Habana is also partnering with DDN® to provide turnkey rack-level solutions that pair the Supermicro server with the DDN AI400X2 storage solution for increased AI storage capacity.
