In the rapidly evolving landscape of artificial intelligence, efficiency is the new currency. As AI models become increasingly complex and computational demands skyrocket, the need for intelligent, cost-effective inference solutions has never been more critical. Enter NVIDIA Dynamo – a groundbreaking open-source inference software that promises to transform how AI factories process and generate tokens, potentially reshaping the entire AI infrastructure ecosystem.

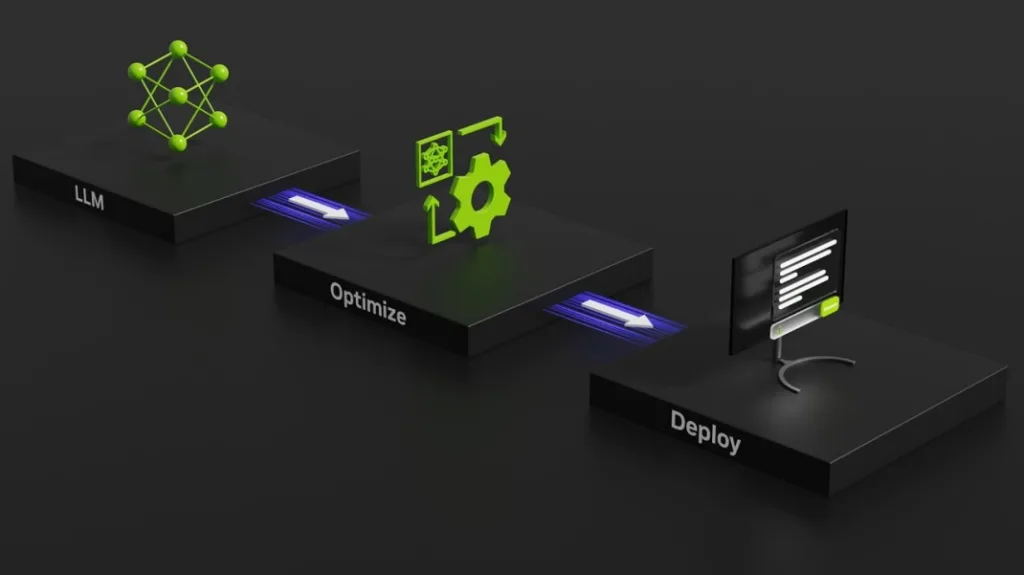

Launched in March 2025, Dynamo represents more than just a technological upgrade; it’s a strategic leap forward in AI computational efficiency. By reimagining how large language models (LLMs) are served and processed, NVIDIA is addressing one of the most pressing challenges in the AI industry: how to maximize performance while minimizing operational costs.

Table of Contents

NVIDIA AI Inference Challenge: More Than Just Computing Power

At its core, AI inference is about translating complex machine learning models into actionable insights. As AI reasoning becomes increasingly prevalent, each model is expected to generate tens of thousands of tokens per prompt – essentially representing its “thinking” process. The challenge? Doing this efficiently and cost-effectively.

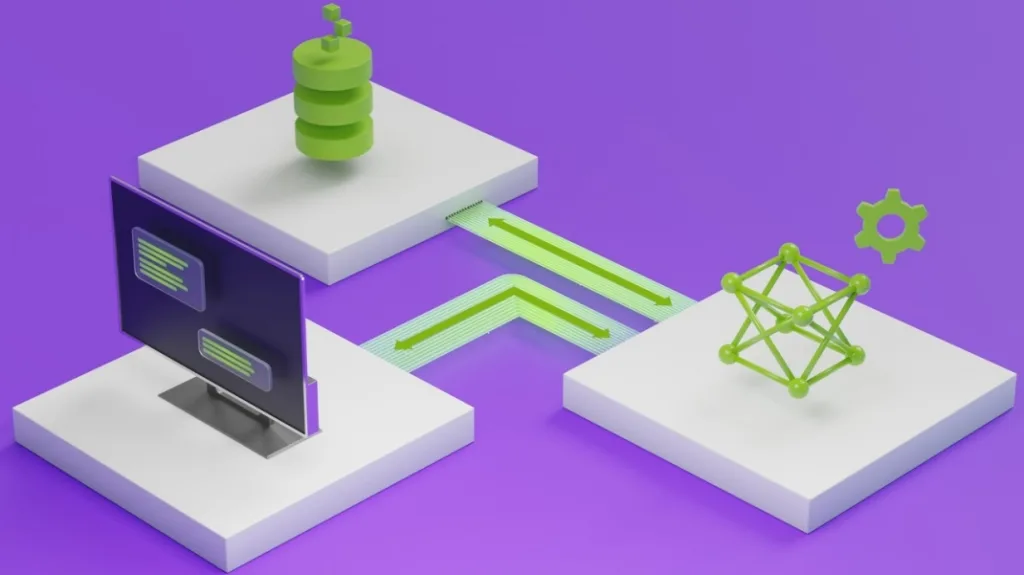

NVIDIA Dynamo tackles this challenge through several innovative approaches:

- Disaggregated Serving: By separating processing and generation phases across different GPUs, Dynamo allows each computational stage to be optimized independently.

- Dynamic GPU Management: The software can add, remove, and reallocate GPUs in real-time, adapting to fluctuating request volumes.

- Intelligent Routing: Dynamo can pinpoint specific GPUs best suited to minimize response computations and efficiently route queries.

Performance Breakthroughs: Numbers That Speak Volumes

The performance metrics are nothing short of impressive:

- Llama Models: Dynamo demonstrated the ability to double performance and revenue on NVIDIA’s Hopper platform

- DeepSeek-R1 Model: When running on GB200 NVL72 racks, Dynamo boosted token generation by over 30 times per GPU

Open-Source Compatibility: Breaking Down Barriers

One of Dynamo’s most significant features is its broad compatibility. The software supports popular frameworks like:

- PyTorch

- SGLang

- NVIDIA TensorRT-LLM

- vLLM

This open approach enables enterprises, startups, and researchers to develop and optimize AI model serving methods across disaggregated inference infrastructures.

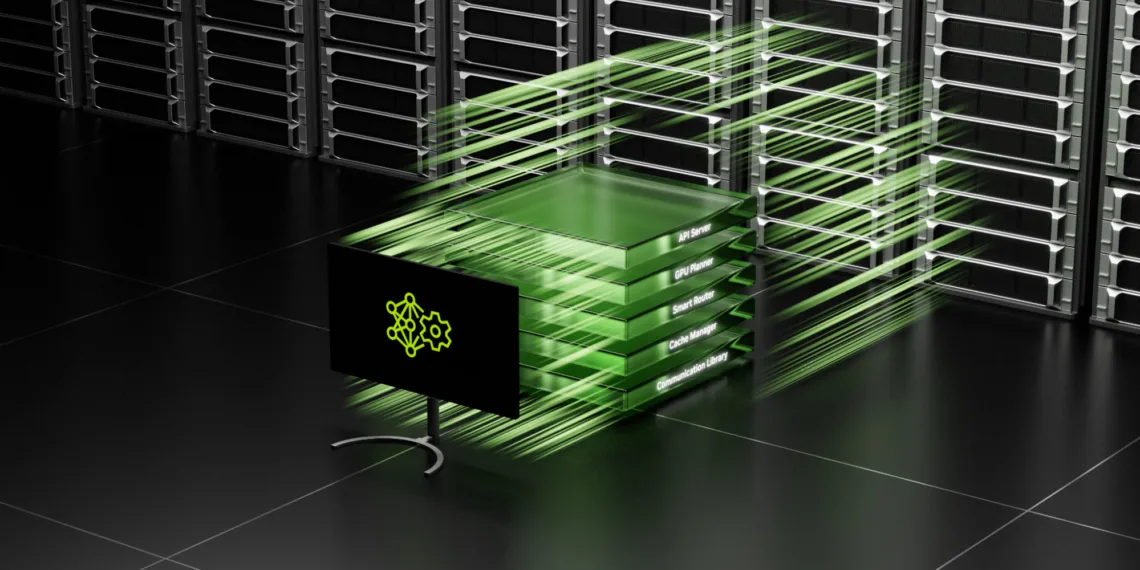

Key Innovations: The Four Pillars of Dynamo

NVIDIA has highlighted four groundbreaking components that set Dynamo apart:

- GPU Planner: Dynamically manages GPU resources based on user demand

- Smart Router: Minimizes costly GPU recomputations

- Low-Latency Communication Library: Accelerates GPU-to-GPU data transfer

- Memory Manager: Seamlessly offloads and reloads inference data

Industry Adoption: Who’s Jumping On Board?

Major players are already exploring Dynamo’s potential, including:

- AWS

- Google Cloud

- Microsoft Azure

- Meta

- Cohere

- Perplexity AI

- Together AI

The Bigger Picture: Democratizing AI Inference

Jensen Huang, NVIDIA’s CEO, frames Dynamo as more than just software. “To enable a future of custom reasoning AI,” he states, “NVIDIA Dynamo helps serve these models at scale, driving cost savings and efficiencies across AI factories.”

Performance Metrics Comparison

| Metric | Traditional Inference | NVIDIA Dynamo |

|---|---|---|

| Token Generation | Baseline | 30x Improvement |

| GPU Utilization | Standard | Optimized |

| Operational Cost | Higher | Reduced |

| Scalability | Limited | Highly Flexible |

The Road Ahead: AI Inference Transformed

NVIDIA Dynamo isn’t just a product; it’s a vision for the future of AI computing. By making inference more efficient, accessible, and cost-effective, it has the potential to accelerate AI adoption across industries.

Elon Musk X Bans Rick Wilson: Free Speech Debate Erupts Over ‘Kill Tesla’ Post

FAQs

Q1: What makes NVIDIA Dynamo different from previous inference servers?

A: Dynamo introduces disaggregated serving, dynamic GPU management, and intelligent routing, offering unprecedented efficiency in AI model processing.

Q2: Is Dynamo compatible with different AI frameworks?

A: Yes, Dynamo supports multiple frameworks including PyTorch, SGLang, NVIDIA TensorRT-LLM, and vLLM.

Q3: How does Dynamo reduce inference costs?

A: Through intelligent GPU allocation, minimizing recomputations, and seamlessly managing memory across different storage devices.