Benchmarks for NVIDIA’s GeForce RTX 4080 16 GB graphics card have surfaced on the Chiphell Forums, showing a performance increase of over 20% in 3DMark testing. It is unknown whether the card tested was a Founders Edition or an AIB model, but it was paired with an AMD Ryzen 7 5800X3D CPU.

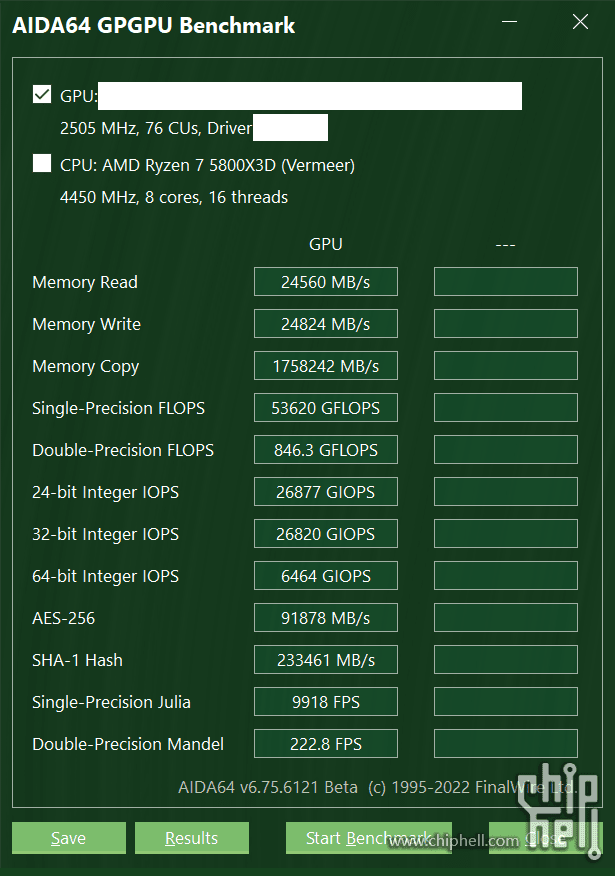

The graphics card was put to the test in 3DMark and a few games. But first, let’s have a look at the AIDA64 GPGPU Benchmark, which reveals that the card can achieve single-precision performance of up to 53.6 TFLOPs, roughly 9% more than the 49 TFLOPs officially announced. In terms of single-precision computing, this represents a gain of about 34% over the GeForce RTX 3090 Ti’s 40 TFLOPs.
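The comparisons above can be recomputed from the quoted TFLOPs figures. The following is a minimal sketch, not part of the original leak; the helper name `percent_gain` is hypothetical, and because the inputs are the rounded figures from this article, the results may differ slightly from percentages derived from unrounded data.

```python
def percent_gain(new: float, baseline: float) -> float:
    """Relative increase of `new` over `baseline`, in percent."""
    return (new - baseline) / baseline * 100.0


# Figures as quoted in the article (all in TFLOPs, single precision).
measured = 53.6      # AIDA64 GPGPU result for the RTX 4080 16 GB
official = 49.0      # officially announced figure
rtx_3090_ti = 40.0   # RTX 3090 Ti figure

print(f"vs official figure: {percent_gain(measured, official):.1f}%")
print(f"vs RTX 3090 Ti:     {percent_gain(measured, rtx_3090_ti):.1f}%")
```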

The NVIDIA GeForce RTX 4080 16 GB performs well in synthetic benchmarks, earning 13977 points in the 3DMark Time Spy Extreme (Graphics), 17465 points in the 3DMark Fire Strike Ultra (Graphics), and 17607 points in the 3DMark Port Royal benchmark.

The user also provided Red Dead Redemption 2 (with and without DLSS) and Shadow of the Tomb Raider performance benchmarks (Full High DLSS Quality).

The NVIDIA GeForce RTX 4080 16 GB graphics card is expected to use a cut-down AD103-300 GPU configuration with 9,728 cores, or 76 SMs enabled out of a total of 84 units, compared with the earlier specification, which offered 80 SMs or 10,240 cores. Because it is a cut-down design, the RTX 4080 might only have 48 MB of L2 cache and fewer ROPs than the complete GPU, which has 64 MB of L2 cache and up to 224 ROPs. The PG136/139-SKU360 PCB is expected to serve as the card’s foundation. According to reports, the graphics card has a maximum clock speed of 2505 MHz.
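As a rough cross-check, the reported core count and clock speed can be turned into a theoretical single-precision figure. This sketch rests on two assumptions that are not stated in the leak: 128 FP32 cores per SM (consistent with 76 SMs giving 9,728 cores) and the usual convention of 2 floating-point operations per core per clock (one fused multiply-add). The function name `fp32_tflops` is hypothetical.

```python
CORES_PER_SM = 128        # assumption: matches 76 SMs -> 9,728 cores
FLOPS_PER_CORE_CLOCK = 2  # assumption: one fused multiply-add per clock


def fp32_tflops(sm_count: int, clock_mhz: float) -> float:
    """Theoretical FP32 throughput in TFLOPs for a given SM count and clock."""
    cores = sm_count * CORES_PER_SM
    return cores * FLOPS_PER_CORE_CLOCK * clock_mhz * 1e6 / 1e12


print(76 * CORES_PER_SM)                 # core count for 76 SMs
print(f"{fp32_tflops(76, 2505):.1f}")    # throughput at the reported 2505 MHz
```

Under these assumptions the 2505 MHz clock yields a value close to the officially announced figure of about 49 TFLOPs, which would suggest that the higher AIDA64 result came from the card running above that clock during the test.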

In terms of memory specifications, the GeForce RTX 4080 is expected to use 16 GB of GDDR6X memory clocked at 22.5 Gbps across a 256-bit bus interface, for a bandwidth of up to 720 GB/s. That is still slightly lower than the 760 GB/s offered by the RTX 3080, which has a wider 320-bit interface but only a meagre 10 GB of capacity. NVIDIA might add a next-generation memory compression suite to make up for the reduced bandwidth of the 256-bit interface.
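The bandwidth figures follow from the per-pin data rate and the bus width: bandwidth in GB/s equals the data rate in Gbps times the bus width in bits, divided by 8. A minimal sketch, with a hypothetical helper name; the 19 Gbps data rate for the RTX 3080 is not given in this article and is supplied here as the card's published memory speed.

```python
def memory_bandwidth_gbs(data_rate_gbps: float, bus_width_bits: int) -> float:
    """Peak memory bandwidth in GB/s from per-pin data rate and bus width."""
    return data_rate_gbps * bus_width_bits / 8  # 8 bits per byte


rtx_4080 = memory_bandwidth_gbs(22.5, 256)  # rumored RTX 4080 16 GB configuration
rtx_3080 = memory_bandwidth_gbs(19.0, 320)  # RTX 3080 10 GB; 19 Gbps is not from the article

print(f"RTX 4080 16 GB: {rtx_4080:.0f} GB/s")
print(f"RTX 3080 10 GB: {rtx_3080:.0f} GB/s")
```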
