The introduction of the Ada Lovelace GPU architecture and the Nvidia RTX 40-series has drawn mixed reactions: excitement, scepticism, and open contempt. Nvidia has gone big, and expensive, in creating a GPU that promises to outperform the best graphics cards currently available. AMD, on the other hand, is taking a more economical approach that might make its upcoming RDNA 3 cards a more attractive, more affordable option.

Although we are not privy to Nvidia’s Bill of Materials (BOM), the company’s reluctance to embrace “Moore’s Law 2.0” and turn to techniques like chiplets is partly to blame for the high pricing of its GPUs. When AMD moved to chiplets, it began to outcompete Intel on CPUs, notably on cost, and it now looks poised to do the same with GPUs. Because analogue circuitry scales very poorly with newer process nodes, putting the analogue memory interfaces on an older process with RDNA 3 is a smart idea. The same applies to cache.

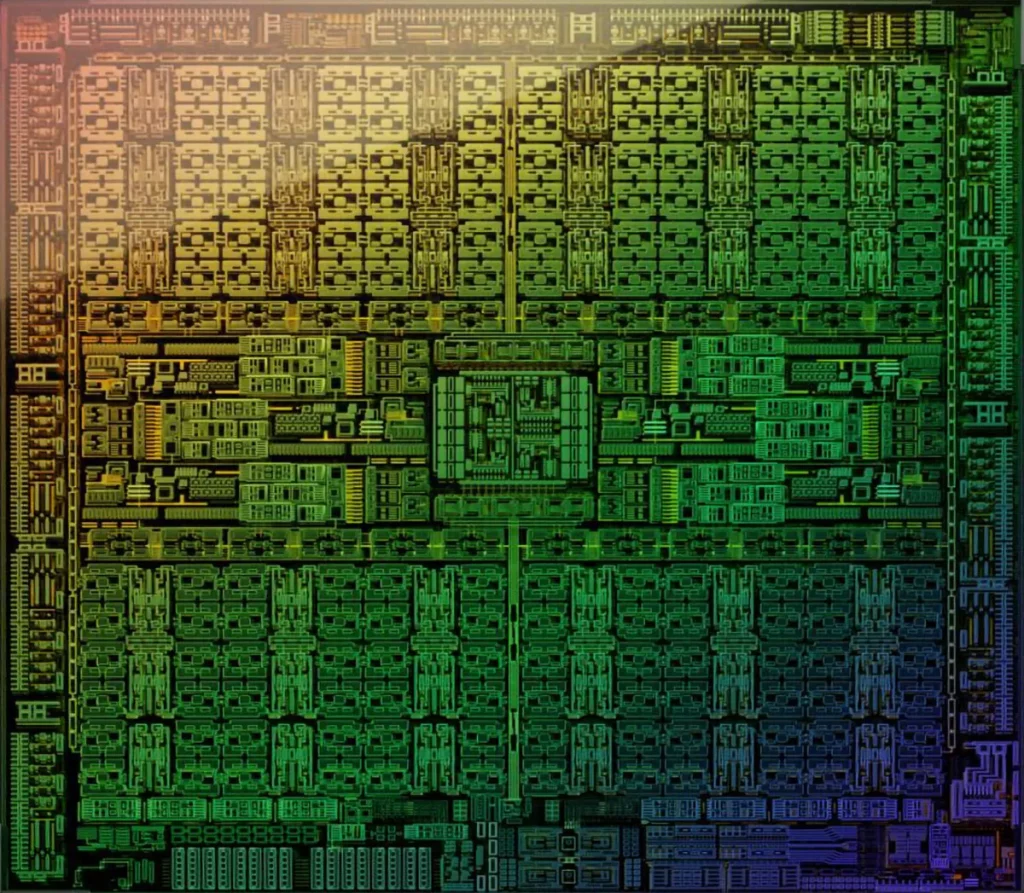

From the AD102 die pictures that Nvidia has shared so far, we can see that the die size is 608mm².

That is only marginally smaller than GA102 at 628mm², but Nvidia is now using the state-of-the-art TSMC 4N process node in place of Samsung 8N. Wafer prices have certainly increased, and over the past year we’ve reported several times on TSMC raising its prices.

The AD102 die, shown in the die image, offers some obvious explanations of how and why Nvidia’s latest masterpiece costs more than chips from earlier generations. The chip’s twelve Graphics Processing Clusters (GPCs), each of which has 12 Streaming Multiprocessors (SMs), are easily distinguishable from the rest of the die. Together, the GPCs and SMs occupy around 45% of the total die area.

The twelve 32-bit GDDR6X memory controllers take up most of the die’s outer border, while the PCIe x16 interface occupies about a third of the bottom edge. Give or take a little, the memory controllers and associated circuitry occupy a sizable 17% of the die area. The memory subsystem consists of more than just that, however, because Nvidia’s L2 cache is now much bigger than in earlier designs.
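To put those percentages into absolute terms, the short sketch below converts the quoted area shares into square millimetres using the 608mm² die size. The shares are the approximate figures from the text, and the names `AREA_SHARES` and `block_area_mm2` are purely illustrative.

```python
DIE_AREA_MM2 = 608.0  # AD102 die size quoted in the article

# Approximate area shares quoted in the article (fractions of the whole die).
AREA_SHARES = {
    "GPCs and SMs": 0.45,
    "memory controllers and related circuitry": 0.17,
}


def block_area_mm2(share, die_area_mm2=DIE_AREA_MM2):
    """Convert a fractional area share into an approximate area in mm^2."""
    return share * die_area_mm2


for name, share in AREA_SHARES.items():
    print(f"{name}: ~{block_area_mm2(share):.0f} mm^2")
```

These are rough estimates only, since the underlying percentages are themselves read off a die shot.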

AMD’s MCD (Memory Cache Die) approach, used with its Radeon RX 7000-series and RDNA 3 GPUs, will reportedly move nearly all of that off the main chiplet, and the MCDs will reportedly use TSMC N6 instead of TSMC N5 to reduce cost while also improving yields.

Contract terms between TSMC and major customers such as Apple, AMD, Intel, and Nvidia are kept secret.

There are claims that TSMC N5 (and consequently 4N, which is essentially just a “refined” N5) is at least twice as expensive as TSMC N7/N6. For reference, with a 608mm² die size, Nvidia can obtain only roughly 90 full AD102 dies per wafer, which is just about two more chips per wafer than GA102.
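The dies-per-wafer figure can be sanity-checked with a common textbook approximation. The sketch below assumes a standard 300mm wafer and treats each die as a simple area with an edge-loss correction. It ignores scribe lines, edge exclusion, the die’s actual aspect ratio, and defects, so it is not the calculator behind the article’s numbers and will not reproduce them exactly. The function name `dies_per_wafer` is illustrative.

```python
import math


def dies_per_wafer(die_area_mm2, wafer_diameter_mm=300.0):
    """Estimate whole dies per wafer with a common approximation.

    gross count = wafer area / die area
    edge loss   = wafer circumference / sqrt(2 * die area)
    Assumptions: no scribe lines, no edge exclusion, no defect losses.
    """
    gross = math.pi * (wafer_diameter_mm / 2) ** 2 / die_area_mm2
    edge_loss = math.pi * wafer_diameter_mm / math.sqrt(2 * die_area_mm2)
    return math.floor(gross - edge_loss)


ad102 = dies_per_wafer(608)  # AD102 die size from the article
ga102 = dies_per_wafer(628)  # GA102 die size from the article
print(f"AD102: ~{ad102} dies per wafer, GA102: ~{ga102} dies per wafer")
```

The estimate for AD102 lands close to the roughly 90 dies quoted above, and the gap to GA102 stays at a handful of dies, which is the point that matters for the cost comparison.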

If TSMC 4N is more than twice as expensive per wafer as Samsung 8N, then each AD102 chip costs roughly twice as much as, or more than, the GA102 used in the prior-generation RTX 3090, because the two dies yield nearly the same number of chips per wafer.
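The relationship between wafer price and per-chip cost can be sketched as a simple ratio. The example below uses the article’s rough counts of 90 dies per wafer for AD102 and about two fewer for GA102. The wafer price ratios are hypothetical inputs, since actual contract pricing is not public, and yield differences between the two processes are ignored.

```python
def per_die_cost_ratio(wafer_price_ratio, dies_new, dies_old):
    """Return (new per-die cost) / (old per-die cost).

    wafer_price_ratio: new wafer price / old wafer price (hypothetical input).
    dies_new, dies_old: full dies per wafer for the new and old chip.
    Assumption: equal yield on both processes.
    """
    return wafer_price_ratio * dies_old / dies_new


# Dies per wafer taken from the article: ~90 for AD102, ~2 fewer for GA102.
for price_ratio in (2.0, 2.2, 2.5):
    ratio = per_die_cost_ratio(price_ratio, dies_new=90, dies_old=88)
    print(f"wafer price x{price_ratio:.1f} -> per-die cost x{ratio:.2f}")
```

Because the die counts are so close, the per-die cost ratio ends up only slightly below the wafer price ratio.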
