Nvidia’s decision to develop its own Arm-based server CPUs, the Grace CPU Superchip and the Grace Hopper Superchip, paved the way for the company to build complete systems that combine CPUs and GPUs. Today, at Computex 2022, Nvidia announced that dozens of reference systems based on its new Arm CPUs and Hopper GPUs will be available from several key server OEMs in the first half of 2023.

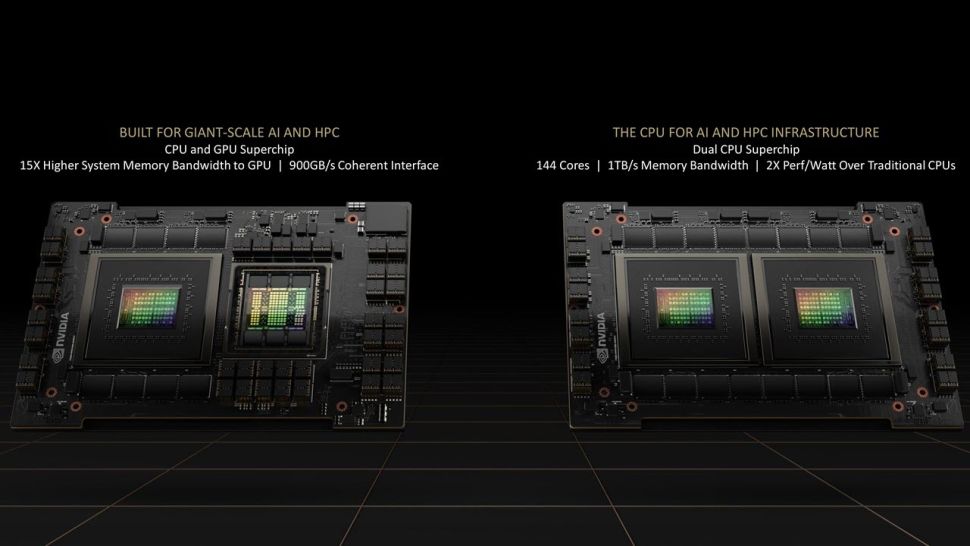

The Grace CPU Superchip is Nvidia’s first CPU-only Arm chip for the data center and comes as two CPUs on one board. The Grace Hopper Superchip instead combines a Grace CPU and a Hopper GPU on the same board. The Neoverse-based CPUs support the Armv9 instruction set, and in both designs the two chips are connected by Nvidia’s NVLink-C2C interconnect.

Nvidia promises that when the Grace CPU Superchip ships in early 2023, it will be the fastest processor on the market for a wide range of applications, including hyperscale computing, data analytics, and scientific computing.

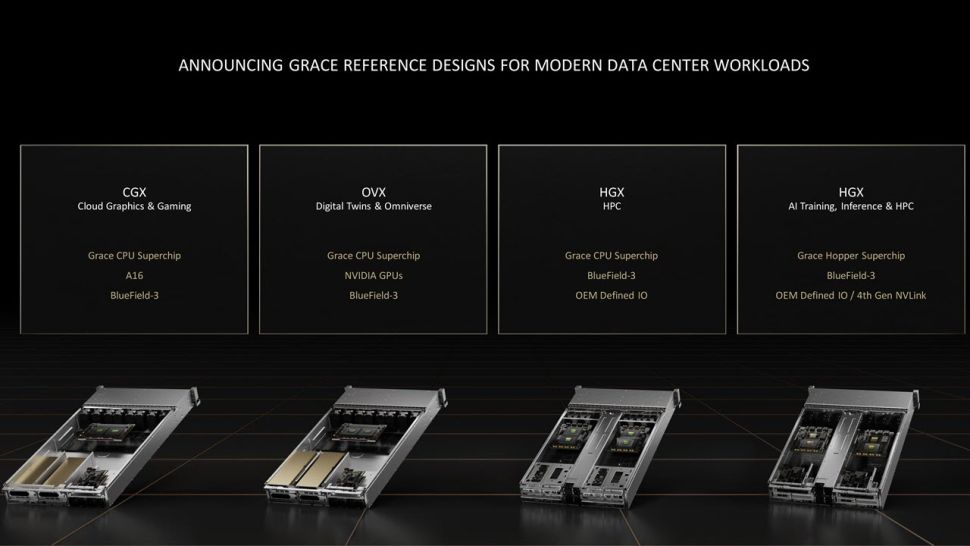

Asus, Gigabyte, Supermicro, QCT, Wiwynn, and Foxconn, among others, plan to release dozens of new server designs in the first half of 2023, a sign that Nvidia’s Grace CPU silicon is on track. Each server design will be based on one of Nvidia’s four reference designs, which include server and baseboard blueprints. The systems will come in 1U and 2U form factors, with the 1U versions requiring liquid cooling.

The Nvidia CGX system, aimed at cloud graphics and gaming, pairs the dual-CPU Grace Superchip with Nvidia’s A16 GPUs. The Nvidia OVX servers, built for digital twin and Omniverse applications, also use the dual-CPU Grace Superchip but allow more flexible pairings with a variety of Nvidia GPU models.

There are two versions of the Nvidia HGX platform.

The first is intended for HPC applications and includes only the dual-CPU Grace Superchip, with no GPUs and OEM-defined I/O. The second, shown on the far right, targets AI training, inference, and HPC workloads. It uses the Grace Hopper (CPU + GPU) Superchip with OEM-defined I/O and supports fourth-generation NVLink for connections outside the server through NVLink Switches.

The NVLink option will be available with the CPU+GPU Grace Hopper Superchip versions, but not with the dual-CPU Grace Superchip. The HGX Grace Hopper Superchip blade pairs a single Grace CPU with a Hopper GPU and offers 512 GB of LPDDR5X memory, 80 GB of HBM3, and up to 3.5 TB/s of total memory bandwidth. Because of the added GPU, this blade has a larger 1000W TDP envelope and is available with either air or liquid cooling. Its larger blades also limit HGX Grace Hopper systems to 42 nodes per rack.

Unsurprisingly, Nvidia bundles all of these systems with another key component in the company’s transition to a solutions provider: the 400 Gbps BlueField-3 Data Processing Units (DPUs), a product line Nvidia gained through its acquisition of Mellanox. These chips offload networking, security, storage, and virtualization/orchestration tasks from the host CPUs, making those functions more efficient.

Nvidia already offers CGX, OVX, and HGX systems with Intel and AMD x86 CPUs, and the company says it will continue to sell those servers and build new versions with Intel and AMD chips. That hasn’t stopped Nvidia from making pointed performance comparisons, though. The company recently pitted its Grace CPU against Intel’s Ice Lake in a weather forecasting benchmark, claiming that its Arm processor is 2X faster and 2.3X more efficient. Nvidia’s current OVX servers use those same Intel CPUs.

AMD hasn’t been spared either. Nvidia says its Grace CPU Superchip is 1.5X faster in the SPECrate2017_int_base benchmark than the two previous-generation 64-core AMD EPYC 7742 (Rome) processors used in its current DGX A100 systems.
