The first benchmarks of AMD’s forthcoming EPYC 7V73X flagship Milan-X CPU were released last week by Chips and Cheese. This week, they’re back with even more numbers demonstrating the performance advantages of 3D V-Cache for forthcoming and next-generation data centre processors.

Chips and Cheese’s earlier benchmarks focused solely on the latency of the upcoming Milan-X CPUs, such as the flagship EPYC 7V73X. This time they have published more latency benchmarks, along with total bandwidth and workload-specific benchmarks, all measured against the EPYC 7763 CPU. Intel’s Ice Lake and Cascade Lake Xeon CPUs are among the more interesting comparisons added to the test suite.

You might be wondering what makes these comparisons intriguing. Intel’s CPUs are monolithic in design and use a mesh interconnect, whereas AMD uses a ring bus inside each chiplet. A ring bus is optimized for lower latency and higher bandwidth, whereas a mesh gives up some of both in favour of a design that scales to more cores, as in Intel’s recent server chips.

As a result, despite having a smaller L2 cache, AMD’s Milan and Milan-X CPUs offer lower latency and more bandwidth than Intel’s competing Xeons, thanks to an enhanced L3 cache.
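The scalability half of the ring versus mesh trade-off can be illustrated by counting hops. The sketch below is only a toy model: the stop counts and grid shapes are hypothetical and do not reflect the actual layout of any AMD or Intel die, and hop count ignores per-hop cost, clock speed and contention, so it says nothing about absolute latency.

```python
from itertools import combinations

def ring_avg_hops(n):
    """Average shortest-path hops between distinct stops on a bidirectional ring."""
    total = sum(min(d, n - d) for d in range(1, n))
    return total / (n - 1)

def mesh_avg_hops(rows, cols):
    """Average Manhattan distance between distinct stops on a rows x cols mesh."""
    nodes = [(r, c) for r in range(rows) for c in range(cols)]
    pairs = list(combinations(nodes, 2))
    total = sum(abs(r1 - r2) + abs(c1 - c2) for (r1, c1), (r2, c2) in pairs)
    return total / len(pairs)

# Hypothetical stop counts, chosen only to show how each topology scales.
for n, (rows, cols) in [(8, (2, 4)), (16, (4, 4)), (64, (8, 8))]:
    ring = ring_avg_hops(n)
    mesh = mesh_avg_hops(rows, cols)
    print(f"{n:>2} stops: ring {ring:.2f} hops, mesh {mesh:.2f} hops")
```

In this model the ring’s average hop count grows roughly linearly with the number of stops, while the mesh’s grows far more slowly, which is why a ring suits a small group of cores and a mesh suits a large monolithic die.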

But first, let’s look at the workload-specific benchmarks, which once again use the AMD EPYC 7V73X Milan-X CPU. The EPYC 7V73X will have 64 cores, 128 threads, and a maximum TDP of 280W. The clock rates will be kept at 2.2 GHz base and 3.5 GHz boost, while the total L3 cache will be increased to a whopping 768 MB. This includes the chip’s regular 256 MB of L3 cache, so 512 MB comes from the stacked L3 SRAM, implying that each of the eight Zen 3 CCDs will gain 64 MB of stacked cache on top of its 32 MB base, for 96 MB per CCD. That’s a 3x increase over the current EPYC Milan CPUs.
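The cache arithmetic is easy to check. This is a minimal sketch using the figures quoted above; the one assumption is the CCD count, derived from 64 cores at 8 cores per Zen 3 CCD.

```python
# Figures quoted in the article.
TOTAL_L3_MB = 768
BASE_L3_MB = 256
CORES = 64
CORES_PER_CCD = 8  # assumption: standard 8-core Zen 3 CCD

def l3_breakdown(total_mb, base_mb, cores, cores_per_ccd):
    """Split the total L3 into base and stacked portions, overall and per CCD."""
    ccds = cores // cores_per_ccd
    stacked_mb = total_mb - base_mb
    return {
        "ccds": ccds,
        "stacked_total_mb": stacked_mb,
        "base_per_ccd_mb": base_mb // ccds,
        "stacked_per_ccd_mb": stacked_mb // ccds,
        "total_per_ccd_mb": total_mb // ccds,
        "ratio_vs_base": total_mb / base_mb,
    }

breakdown = l3_breakdown(TOTAL_L3_MB, BASE_L3_MB, CORES, CORES_PER_CCD)
for name, value in breakdown.items():
    print(f"{name}: {value}")
```

The per-CCD numbers come out to 32 MB of base L3 plus 64 MB of stacked L3, or 96 MB in total, which matches the 3x ratio for the whole chip.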

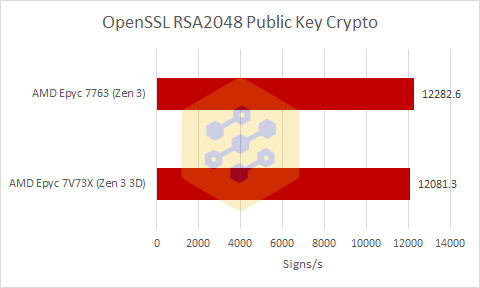

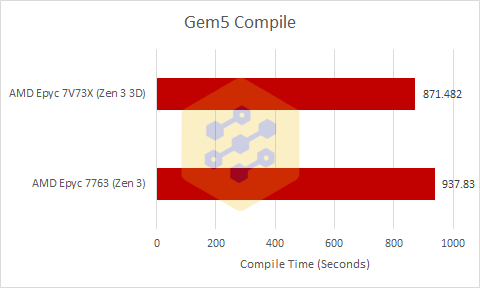

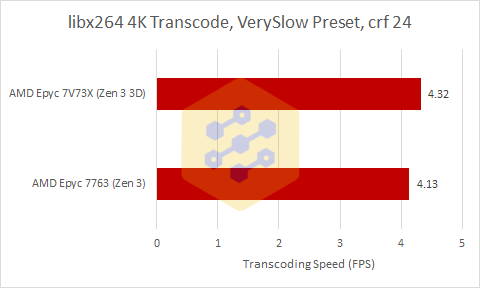

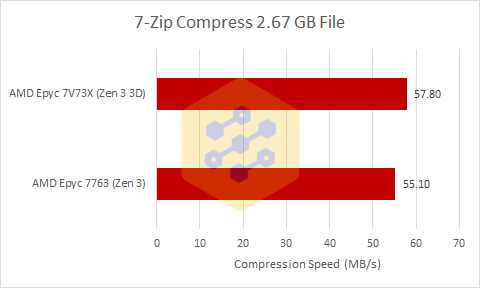

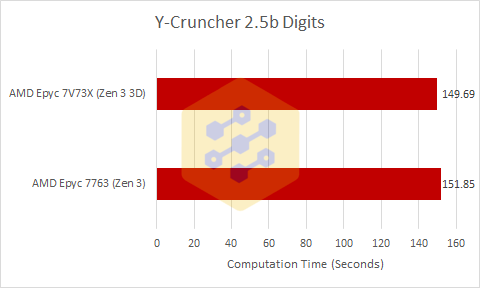

In four of Chips and Cheese’s five benchmarks, the Milan-X EPYC 7V73X CPU came out on top. It loses to the EPYC 7763 only in OpenSSL. That workload puts no strain on the cache, and the Milan-X CPU runs at a slightly lower but consistent clock when all CCDs are loaded, so the performance loss is to be expected.

In Gem5, the Milan-X processor is 7.6 percent faster than the normal Milan CPU while running at roughly 5 percent lower clocks. That works out to an iso-clock gain of about 12.5 percent, an excellent result that can be credited to the added V-Cache.
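For a rough sense of where an iso-clock figure comes from, here is a back-of-the-envelope sketch. It assumes performance scales linearly with clock speed, which is a simplification, and it uses the rounded percentages quoted above rather than the measured clocks, so it lands near the reported figure rather than exactly on it.

```python
def iso_clock_gain(measured_gain, clock_deficit):
    """Estimate the gain at equal clocks, assuming performance scales linearly with clock.

    measured_gain: observed speedup as a fraction (0.076 for 7.6 percent)
    clock_deficit: how much lower the faster chip's clock was (0.05 for 5 percent)
    """
    return (1 + measured_gain) / (1 - clock_deficit) - 1

gain = iso_clock_gain(0.076, 0.05)
print(f"Estimated iso-clock gain: {gain:.1%}")  # prints 13.3%
```

With rounded inputs the estimate is a little over 13 percent, in the same range as the roughly 12.5 percent quoted above.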

Other benchmarks indicate similar improvements in performance. In Y-Cruncher, a particularly FPU- and memory-dependent test, the AMD EPYC 7V73X Milan-X CPU gives a 1.5 percent performance advantage. Chips and Cheese explains that the two chips did not clock alike in this test (the Milan-X CPU went to 1-CCD boost speeds and normal Milan didn’t), and that the 3D V-Cache compensated for the clock loss.

With that said, Chips and Cheese believe that the 3D V-Cache benefits for AMD’s EPYC Milan-X CPUs are real, and they are excited to see what the future holds for V-Cache technologies. I’ve included their conclusion below, but I encourage you to visit their page to read the whole study on Milan-X.

V-Cache is a very interesting addition to Milan. In our testing, it did not degrade performance except OpenSSL where the performance difference was a wash because it’s completely compute-bound. And in some tests, V-Cache did add performance to the already very performant Milan.

V-Cache is also a large technical achievement for AMD considering that the latency increase of 3 to 4 cycles is trifling considering the tripling of the L3. On the bandwidth front, while AMD did sacrifice Milan’s lack of variation of L3 bandwidth for around 25% more single-threaded bytes per cycle bandwidth but 10% less whole CCD bandwidth with Milan-X, that is similar behavior to previous generation server CPUs from AMD and even with the slight bandwidth reduction, it blows Intel’s L3 bandwidth out of the water.

All in all, V-Cache is both a very interesting technology and a decent performance enhancer which makes me very excited for what AMD does next with the technology.

via Chips and Cheese
