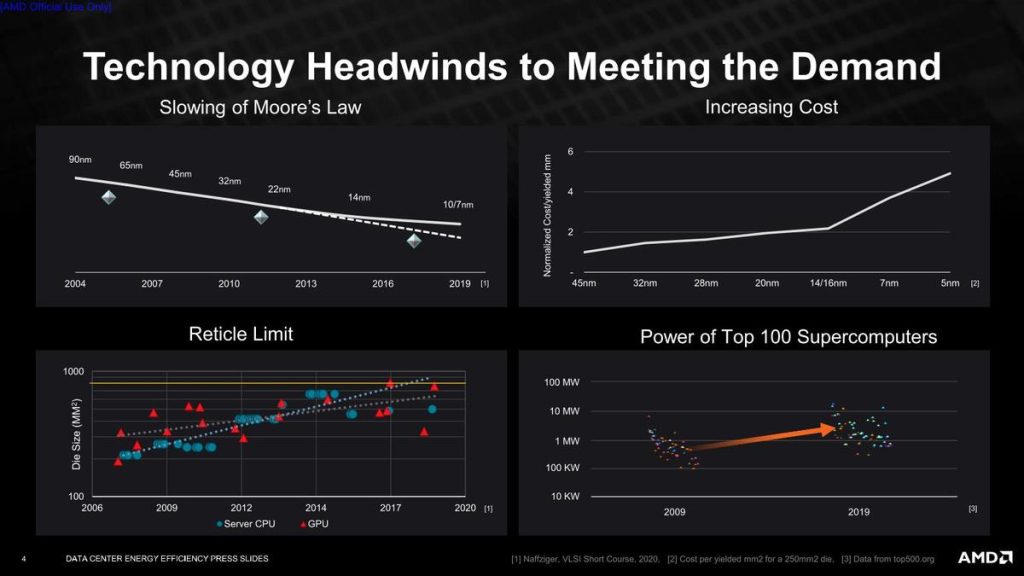

AMD plans to deliver a thirtyfold increase in energy efficiency for its EPYC CPUs and Instinct accelerators in AI training and high-performance computing (HPC) workloads running on accelerated compute nodes. The company has announced a specific goal to that end.

AMD aims to reach the target by 2025, with the gains showing up in its high-performance CPUs, the GPU accelerators it offers for AI training, and accelerated HPC node configurations.

“Achieving gains in processor energy efficiency is a long-term design priority for AMD and we are now setting a new goal for modern compute nodes using our high-performance CPUs and accelerators when applied to AI training and high-performance computing deployments. Focused on these very important segments and the value proposition for leading companies to enhance their environmental stewardship, AMD’s 30x goal outpaces industry energy efficiency performance in these areas by 150% compared to the previous five-year time period.”

— Mark Papermaster, Executive Vice President, and CTO, AMD

To reach this goal, AMD will need to increase the energy efficiency of its compute nodes at a rate more than 2.5 times faster than the improvement the industry as a whole achieved over the last five years.

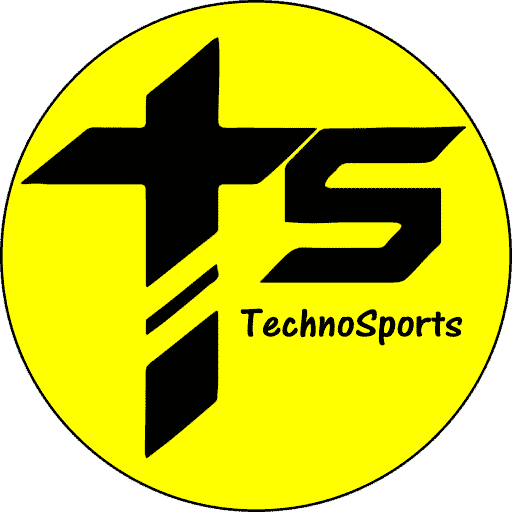

“With computing becoming ubiquitous from edge to core to cloud, AMD has taken a bold position on the energy efficiency of its processors, this time for the accelerated compute for AI and High-Performance Computing applications. Future gains are more difficult now as the historical advantages that come with Moore’s Law have greatly diminished. A 30-times improvement in energy efficiency in five years will be an impressive technical achievement that will demonstrate the strength of AMD technology and their emphasis on environmental sustainability.”

— Addison Snell, CEO of Intersect360 Research

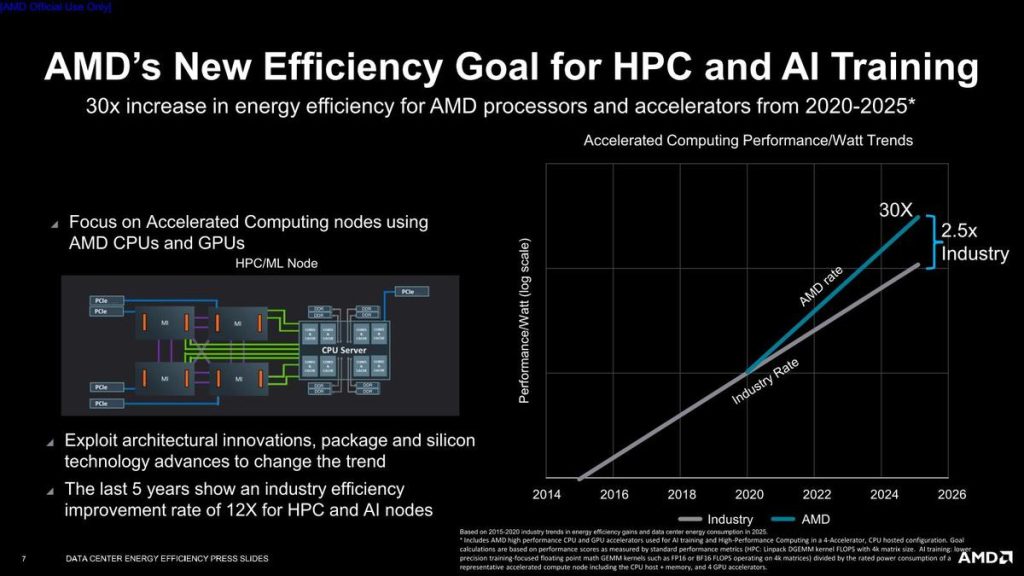

Accelerated compute nodes are among the most powerful and advanced computing systems in the world, which is why they sit at the heart of many leading installations. They handle research and supercomputer workloads that standard systems could not process, and scientists use them to make discoveries and breakthroughs in fields including climate prediction and alternative energy.

According to AMD, the plan would save several billion kilowatt-hours of electricity by 2025. It would also reduce the power required "to complete a single calculation by 97% over five years."
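The 97% figure follows directly from the 30x target: a node that is thirty times more efficient needs one thirtieth of the energy per calculation. The short Python sketch below checks that arithmetic and also shows the yearly improvement the goal implies. The function names are illustrative, and the assumption of a constant yearly rate is ours, not something AMD has stated.

```python
# Illustrative arithmetic only. The 30x gain and the five-year window
# come from the article; everything else is an assumption for the sketch.
EFFICIENCY_GAIN = 30.0  # target improvement in performance per watt
YEARS = 5               # length of the goal period


def energy_reduction(gain: float) -> float:
    """Fractional drop in energy per calculation for a given efficiency gain."""
    return 1.0 - 1.0 / gain


def annual_factor(gain: float, years: int) -> float:
    """Constant yearly improvement factor that compounds to `gain`.

    Assumes the same rate every year, which is a simplification.
    """
    return gain ** (1.0 / years)


print(f"Energy per calculation falls by {energy_reduction(EFFICIENCY_GAIN):.1%}")
print(f"Implied yearly improvement: {annual_factor(EFFICIENCY_GAIN, YEARS):.2f}x")
```

Under these assumptions the reduction works out to about 96.7%, which rounds to the quoted 97%, and efficiency would have to roughly double every year.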

“The energy efficiency goal set by AMD for accelerated compute nodes used for AI training and High-Performance Computing fully reflects modern workloads, representative operating behaviors, and accurate benchmarking methodology.”

— Dr. Jonathan Koomey, President of Koomey Analytics

AMD's methodology also uses "segment-specific datacenter power utilization effectiveness (PUE) with equipment utilization taken into account." Power consumption for both CPUs and GPUs is based on segment-specific utilization percentages and then multiplied by PUE to determine the actual energy use that goes into the performance-per-watt calculation.
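As a rough illustration of that calculation, the Python sketch below weights each component's power draw by a utilization percentage, scales the total by PUE, and divides performance by the result. It is a minimal sketch of the idea as described here, not AMD's actual methodology: the class, the function names, and every number in it (power ratings, utilization, PUE, performance) are hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass
class Component:
    name: str
    rated_power_w: float  # hypothetical rated power draw in watts
    utilization: float    # hypothetical segment utilization, between 0 and 1


def facility_power_w(components: list[Component], pue: float) -> float:
    """Utilization-weighted component power, scaled by PUE for facility overhead."""
    it_power = sum(c.rated_power_w * c.utilization for c in components)
    return it_power * pue


def perf_per_watt(performance: float, components: list[Component], pue: float) -> float:
    """Performance divided by the PUE-adjusted power of the node."""
    return performance / facility_power_w(components, pue)


# Hypothetical node: one CPU and a group of four GPU accelerators.
node = [
    Component("cpu", rated_power_w=280.0, utilization=0.5),
    Component("gpus", rated_power_w=4 * 500.0, utilization=0.8),
]

power = facility_power_w(node, pue=1.2)
print(f"PUE-adjusted power: {power:.0f} W")
print(f"Performance per watt: {perf_per_watt(100_000.0, node, pue=1.2):.1f} units/W")
```

Multiplying by PUE charges the node for cooling and other facility overhead, so the same hardware scores lower in a less efficient datacenter.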

If all goes well, we can expect AMD to achieve its goals by 2025.