The official details are in and we now have the information about Intel’s next-generation Sapphire Rapids-SP CPU lineup. This lineup will be a part of the 4th Generation of the Xeon Scalable family.

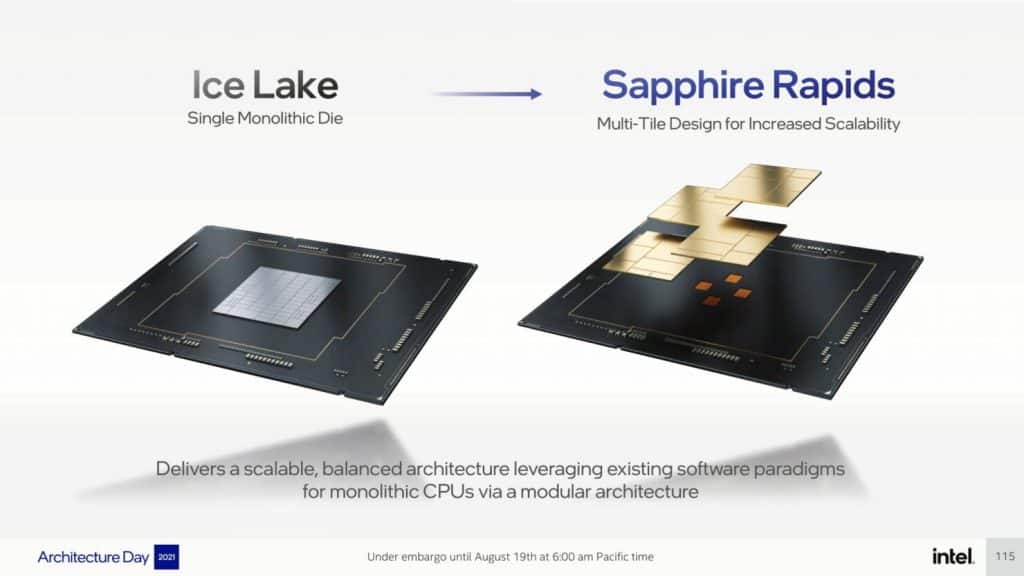

According to the information released by Intel, the next-generation Sapphire Rapids-SP CPU lineup will consist of a range of new technologies with the most important being the seamless integration of multiple chiplets or ‘Tiles’.

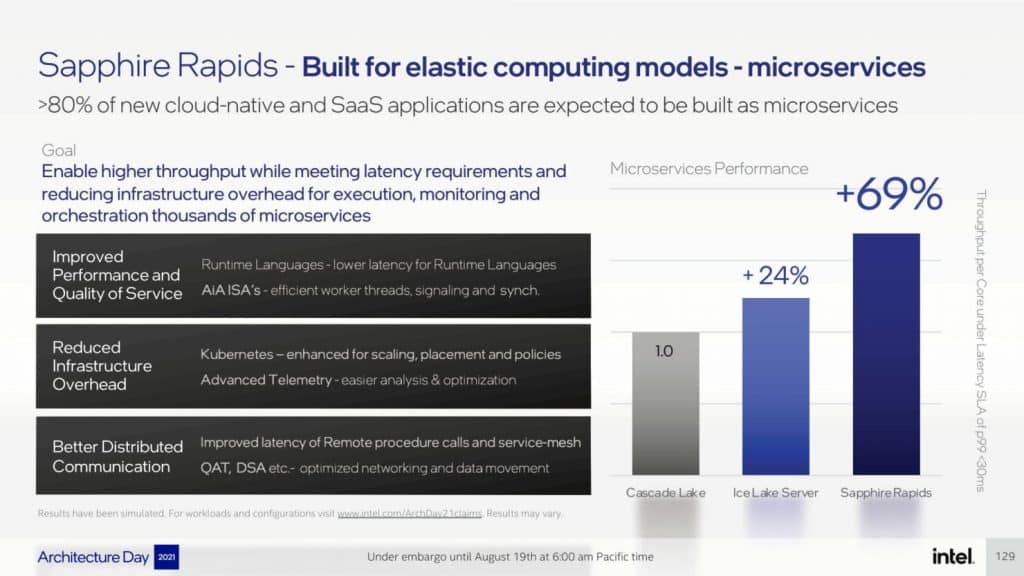

The lineup will replace the Ice Lake-SP family and will be built entirely on the ‘Intel 7’ process node. Sapphire Rapids will also feature the performance-optimized Golden Cove core architecture, which delivers a 20% IPC improvement over the Willow Cove core architecture.

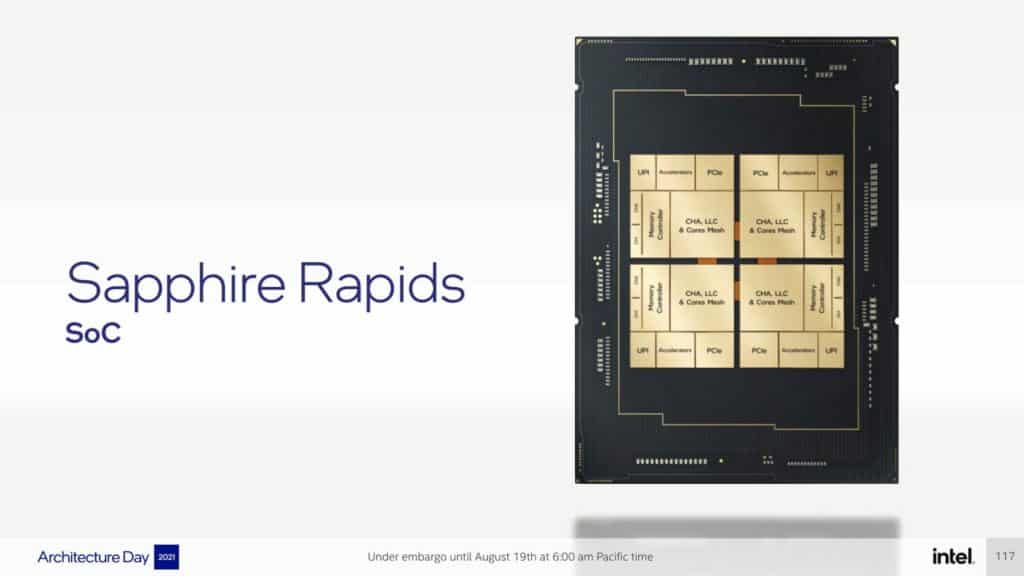

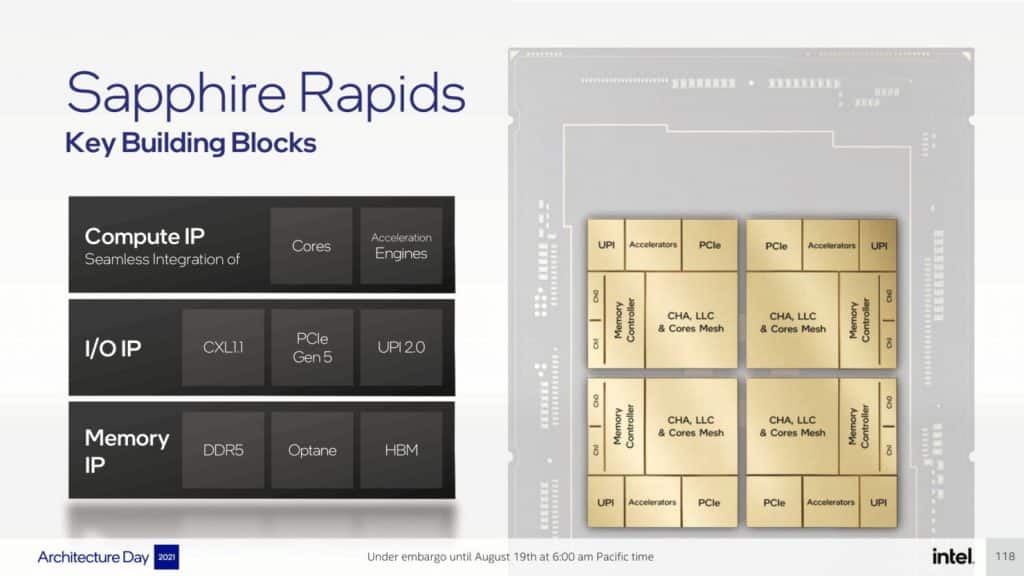

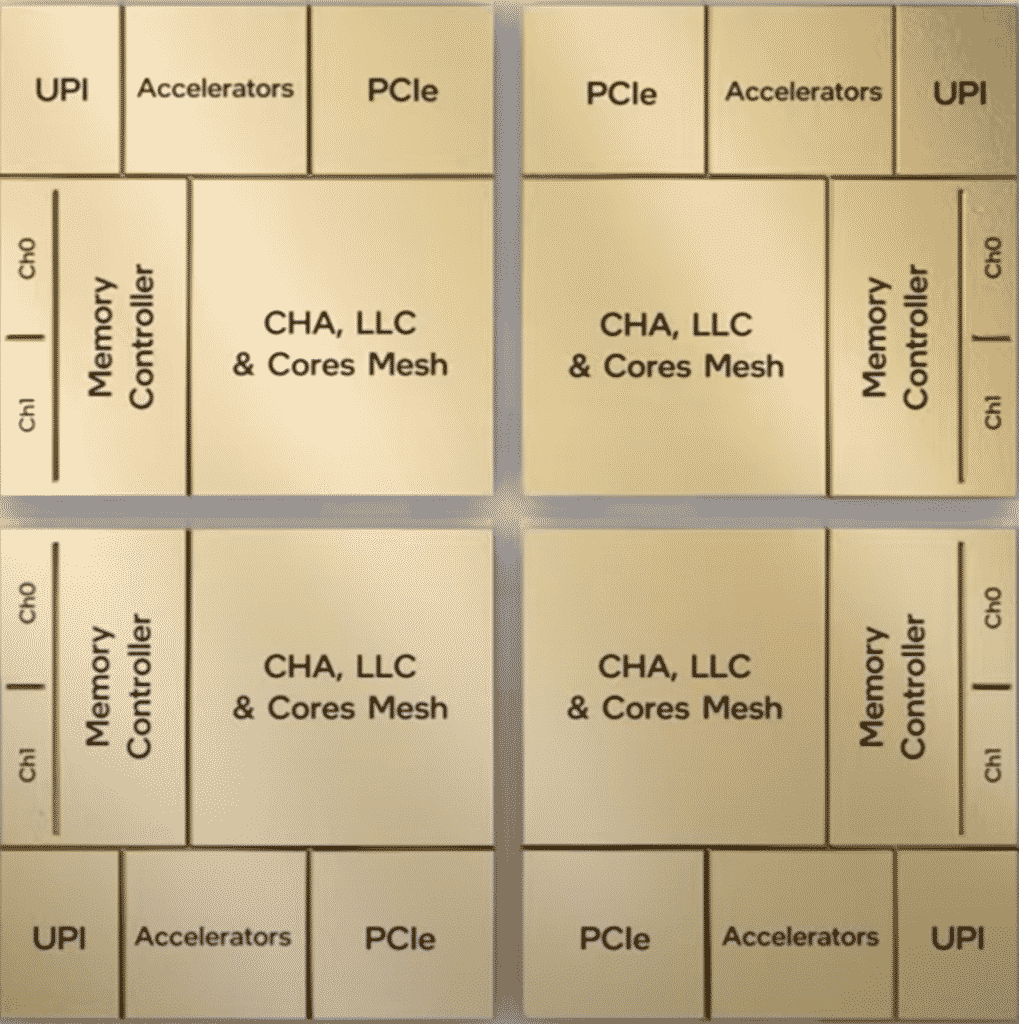

For this next-generation server processor, Intel has used a four-tile chiplet design that comes in HBM and non-HBM flavours. The chip acts as a single SoC, and each thread has full access to all resources on all tiles. This provides low latency and high cross-section bandwidth across the entire SoC. Each tile is composed of three main IP blocks, which are detailed below:

Compute IP

- Cores

- Acceleration Engines

I/O IP

- CXL 1.1

- PCIe Gen 5

- UPI 2.0

Memory IP

- DDR5

- Optane

- HBM

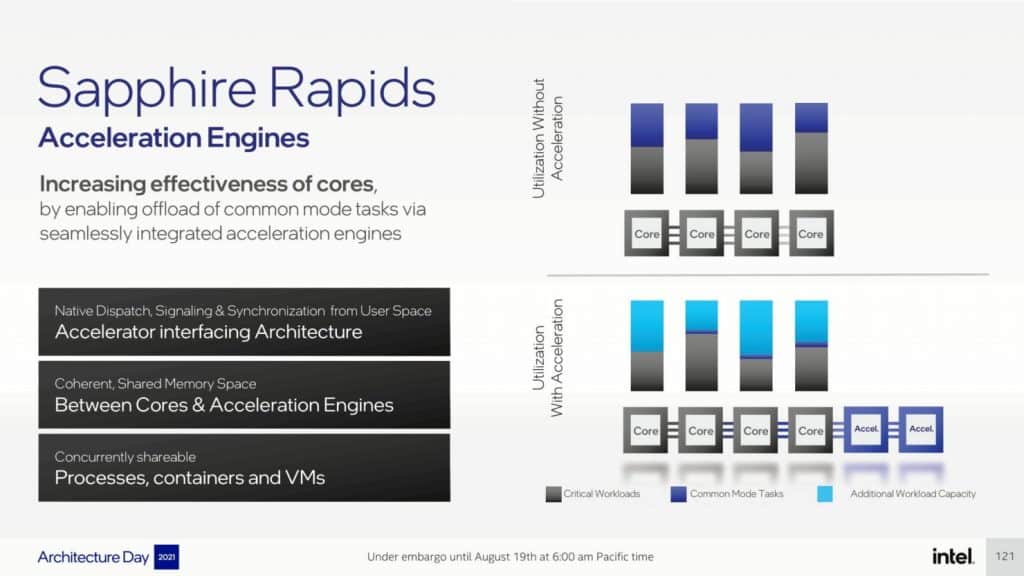

The key changes that Sapphire Rapids will bring to the data centre platform include AMX, AiA, FP16, and CLDEMOTE capabilities. The Acceleration Engines are promised to increase the effectiveness of each core by offloading common-mode tasks. This should increase performance and decrease the time taken to complete a given task.
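As a rough illustration of how software might check for these capabilities, the sketch below parses the `flags` line of a Linux `/proc/cpuinfo` dump. The flag names (`amx_tile`, `amx_bf16`, `amx_int8`, `avx512_fp16`, `cldemote`) are the ones recent Linux kernels report; the grouping into `SPR_FLAGS` and the `detect_features` helper are assumptions made for this example, not something from Intel's announcement. AiA is left out because it does not map to a single flag.

```python
# Hypothetical grouping of Linux /proc/cpuinfo flag names by the feature
# names used in the article. AiA is omitted: it has no single flag.
SPR_FLAGS = {
    "AMX": ("amx_tile", "amx_bf16", "amx_int8"),
    "FP16": ("avx512_fp16",),
    "CLDEMOTE": ("cldemote",),
}


def detect_features(cpuinfo_text):
    """Return {feature: bool} using the first 'flags' line in the text."""
    flags = set()
    for line in cpuinfo_text.splitlines():
        if line.startswith("flags") and ":" in line:
            # Everything after the colon is a space-separated flag list.
            flags = set(line.split(":", 1)[1].split())
            break
    return {
        name: all(flag in flags for flag in needed)
        for name, needed in SPR_FLAGS.items()
    }


if __name__ == "__main__":
    try:
        with open("/proc/cpuinfo") as fh:
            print(detect_features(fh.read()))
    except OSError:
        print("/proc/cpuinfo is not available on this system")
```

On a machine without these extensions, or on a kernel too old to know about them, every entry simply comes back `False`.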

Coming to the I/O advancements, Intel has introduced CXL 1.1 to offer accelerator and memory expansion in the data centre segment. There is also improved multi-socket scaling via Intel UPI, which delivers up to four x24 UPI links at 16 GT/s and a new 8S-4UPI performance-optimized topology. The new tile architecture also boosts the cache beyond 100 MB and adds Optane Persistent Memory 300 series support.

Intel also announced that its Sapphire Rapids-SP CPUs can house up to four HBM packages, all offering significantly higher DRAM bandwidth than a baseline Sapphire Rapids-SP Xeon CPU with 8-channel DDR5 memory. The support for HBM memory will offer both increased capacity and bandwidth.

Intel Sapphire Rapids-SP Xeon CPU Platform

Coming to the Sapphire Rapids-SP Xeon lineup, it will make use of 8-channel DDR5 memory at speeds of up to 4800 MT/s and will support PCIe Gen 5.0 on the Eagle Stream platform. The platform will introduce the LGA 4677 socket, replacing the LGA 4189 socket used by the Cedar Island and Whitley platforms, which house the Cooper Lake-SP and Ice Lake-SP processors, respectively.
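To put the memory configuration in perspective, here is a back-of-the-envelope calculation of theoretical peak bandwidth for 8-channel DDR5-4800 next to the 8-channel DDR4-3200 of Ice Lake-SP. It assumes a 64-bit data path per channel and ignores ECC bits and real-world efficiency, so treat the results as upper bounds rather than measured figures. The helper name `peak_ddr_bandwidth_gbs` is made up for this sketch.

```python
def peak_ddr_bandwidth_gbs(channels, mega_transfers_per_s, bus_width_bits=64):
    """Theoretical peak bandwidth in GB/s (decimal), excluding ECC bits.

    Assumption: each channel moves bus_width_bits of data per transfer.
    """
    bytes_per_transfer = bus_width_bits // 8
    return channels * mega_transfers_per_s * bytes_per_transfer / 1000


# Sapphire Rapids-SP: 8 channels of DDR5-4800
print(peak_ddr_bandwidth_gbs(8, 4800))  # 307.2
# Ice Lake-SP: 8 channels of DDR4-3200
print(peak_ddr_bandwidth_gbs(8, 3200))  # 204.8
```

Under these assumptions the move to DDR5-4800 gives a 1.5x increase in theoretical memory bandwidth per socket at the same channel count.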

The top-end unit of the lineup will feature 56 cores with a TDP of 350W and will be a low-bin split variant, meaning that it uses a tile or MCM design. The Sapphire Rapids-SP Xeon CPU will be composed of a 4-tile layout, with each tile featuring 14 cores.

Following are the leaked configurations:

- Sapphire Rapids-SP 24 Core / 48 Thread / 45.0 MB / 225W

- Sapphire Rapids-SP 28 Core / 56 Thread / 52.5 MB / 250W

- Sapphire Rapids-SP 40 Core / 80 Thread / 75.0 MB / 300W

- Sapphire Rapids-SP 44 Core / 88 Thread / 82.5 MB / 270W

- Sapphire Rapids-SP 48 Core / 96 Thread / 90.0 MB / 350W

- Sapphire Rapids-SP 56 Core / 112 Thread / 105 MB / 350W
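The cache figures in the leaked list all work out to the same amount of L3 per core, which the short sketch below checks. The `LEAKED` tuples are copied from the list above; the `expected_config` helper and its assumption of two threads per core are illustrative only and do not come from Intel.

```python
# (cores, L3 cache in MB, TDP in W) copied from the leaked configurations.
LEAKED = [
    (24, 45.0, 225),
    (28, 52.5, 250),
    (40, 75.0, 300),
    (44, 82.5, 270),
    (48, 90.0, 350),
    (56, 105.0, 350),
]


def expected_config(cores, l3_per_core_mb=1.875, threads_per_core=2):
    """Derive (threads, L3 in MB) from a core count.

    Assumptions: two threads per core and 1.875 MB of L3 per core.
    """
    return cores * threads_per_core, cores * l3_per_core_mb


for cores, l3_mb, tdp_w in LEAKED:
    threads, l3 = expected_config(cores)
    print(
        f"{cores} cores -> {threads} threads, {l3} MB L3 "
        f"(leak: {l3_mb} MB), {tdp_w / cores:.2f} W per core"
    )
```

Every leaked cache size matches 1.875 MB per core, so a 14-core tile would carry 26.25 MB of L3 under the same assumption.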

The Intel Sapphire Rapids CPUs will contain 4 HBM2 stacks with a maximum memory capacity of 64 GB (16 GB each). The company will most probably launch Sapphire Rapids in 2022, followed by the HBM variants, which are expected to launch around 2023.

Intel Xeon SP Families:

| Family Branding | Skylake-SP | Cascade Lake-SP/AP | Cooper Lake-SP | Ice Lake-SP | Sapphire Rapids | Emerald Rapids | Granite Rapids | Diamond Rapids |
|---|---|---|---|---|---|---|---|---|
| Process Node | 14nm+ | 14nm++ | 14nm++ | 10nm+ | Intel 7 | Intel 7 | Intel 4 | Intel 3? |
| Platform Name | Intel Purley | Intel Purley | Intel Cedar Island | Intel Whitley | Intel Eagle Stream | Intel Eagle Stream | Intel Mountain Stream / Intel Birch Stream | Intel Mountain Stream / Intel Birch Stream |
| MCP (Multi-Chip Package) SKUs | No | Yes | No | No | Yes | TBD | TBD (Possibly Yes) | TBD (Possibly Yes) |
| Socket | LGA 3647 | LGA 3647 | LGA 4189 | LGA 4189 | LGA 4677 | LGA 4677 | LGA 4677 | TBD |
| Max Core Count | Up To 28 | Up To 28 | Up To 28 | Up To 40 | Up To 56 | TBD | Up To 120? | TBD |
| Max Thread Count | Up To 56 | Up To 56 | Up To 56 | Up To 80 | Up To 112 | TBD | Up To 240? | TBD |
| Max L3 Cache | 38.5 MB L3 | 38.5 MB L3 | 38.5 MB L3 | 60 MB L3 | 105 MB L3 | TBD | TBD | TBD |
| Memory Support | DDR4-2666 6-Channel | DDR4-2933 6-Channel | Up To 6-Channel DDR4-3200 | Up To 8-Channel DDR4-3200 | Up To 8-Channel DDR5-4800 | Up To 8-Channel DDR5-5200? | TBD | TBD |
| PCIe Gen Support | PCIe 3.0 (48 Lanes) | PCIe 3.0 (48 Lanes) | PCIe 3.0 (48 Lanes) | PCIe 4.0 (64 Lanes) | PCIe 5.0 (80 Lanes) | PCIe 5.0 | PCIe 6.0? | PCIe 6.0? |
| TDP Range | 140W-205W | 165W-205W | 150W-250W | 105W-270W | Up To 350W | TBD | TBD | TBD |
| 3D XPoint Optane DIMM | N/A | Apache Pass | Barlow Pass | Barlow Pass | Crow Pass | Crow Pass? | Donahue Pass? | Donahue Pass? |
| Competition | AMD EPYC Naples 14nm | AMD EPYC Rome 7nm | AMD EPYC Rome 7nm | AMD EPYC Milan 7nm+ | AMD EPYC Genoa ~5nm | AMD Next-Gen EPYC (Post Genoa) | AMD Next-Gen EPYC (Post Genoa) | AMD Next-Gen EPYC (Post Genoa) |
| Launch | 2017 | 2018 | 2020 | 2021 | 2022 | 2023? | 2024? | 2025? |