The graphics giant announced a beastly workstation GPU for its wide range of customers back in November last year, in the form of an A100 GPU based on the Ampere architecture. Now, seven months later, NVIDIA has announced an A100 variant with a PCI Express interface and 80GB of HBM2e memory.

The new GPU offers exactly the same features as the regular A100 but on a standard PCIe interface, and it comes with a lower TDP. Interestingly, NVIDIA has kept it on the 7nm Ampere GA100 GPU with 6912 CUDA cores, while the memory bandwidth has been increased to 2039 GB/s (484 GB/s more than the A100 40GB).
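To put the bandwidth figures in perspective, here is a quick back-of-the-envelope sketch in Python. It uses only the two numbers quoted above (2039 GB/s and the 484 GB/s gap); the variable and function names are made up for illustration, and the result is only as accurate as those quoted figures.

```python
# Figures as quoted in this article, in GB/s. They are taken at face value
# here, not independently verified.
A100_80GB_BANDWIDTH = 2039
QUOTED_DIFFERENCE = 484


def implied_baseline(new_bw: int, diff: int) -> int:
    """Bandwidth of the older card implied by the new figure and the gap."""
    return new_bw - diff


def percent_uplift(new_bw: float, base_bw: float) -> float:
    """Relative increase of new_bw over base_bw, in percent."""
    return (new_bw - base_bw) / base_bw * 100


a100_40gb_bandwidth = implied_baseline(A100_80GB_BANDWIDTH, QUOTED_DIFFERENCE)
uplift = percent_uplift(A100_80GB_BANDWIDTH, a100_40gb_bandwidth)

print(f"Implied A100 40GB bandwidth: {a100_40gb_bandwidth} GB/s")
print(f"Relative uplift: {uplift:.1f}%")
```

On these numbers the A100 40GB works out to 1555 GB/s, so the 80GB card offers roughly 31% more memory bandwidth.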

With such high-speed 80GB memory and such a large number of CUDA cores, you will find this GPU a strong fit for high-performance computing and for accelerating the training of deep learning models.

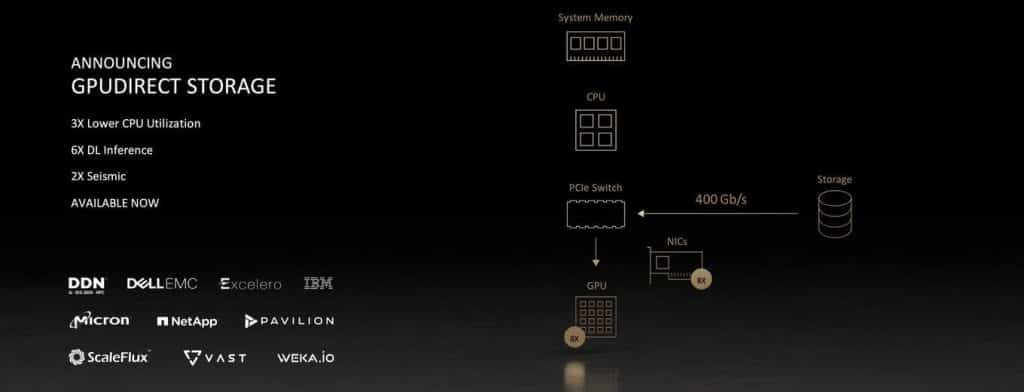

Taking this even further, NVIDIA has also launched its GPUDirect Storage feature, which is similar to the Microsoft DirectStorage technology found on consumer Xbox consoles and coming soon to Windows 11. Microsoft's tech provides fast access to NVMe storage, which can cut loading times in consumer workloads such as gaming.

NVIDIA's technology aims for something similar, except that data moves from storage directly into the large memory pool on the GPU, here a whopping 80GB of faster HBM2e memory. Server manufacturers such as Dell, Lenovo, Gigabyte and others will likely be looking to use the new NVIDIA A100 PCIe accelerator.